Roger Dannenberg, creator of Audacity and Rock Prodigy, will give a lecture, a concert, and a workshop at the UPV (the workshop requires registration; all events will be held in English):

Article: Soundcool, internationally acclaimed educational technology

Lecture: Music Understanding and Future Music Software

On Tuesday, March 8, at 12:30 (NOTE THE CHANGED TIME), in the Salón de Actos (assembly hall) of the Cubo Amarillo, building 8E, 3rd floor. Free admission until capacity is reached; students of the MEVIC courses and the UPV Master's in Music will have priority over other attendees.


Speaker: Roger B. Dannenberg, Carnegie Mellon University

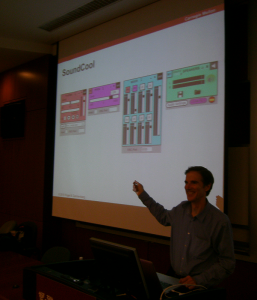

Abstract: Music understanding by computer has found many applications, ranging from music education to intelligent real-time machine performance. I will describe how current research might impact the very nature of music in the future through music understanding by computer, music education applications, computer support for popular music performance, and intelligent music displays. One of the trends in future music software is to give users greater creative power through modular “construction kits.” I will illustrate this using SoundCool and work from Carnegie Mellon on Human-Computer Music Performance.

Bio: Roger B. Dannenberg, Professor of Computer Science, Art, and Music at Carnegie Mellon University, is a pioneer in the field of computer music. His work in computer accompaniment led to three patents and the SmartMusic system, now used by over one hundred thousand music students. He also played a central role in the development of the Piano Tutor and Rock Prodigy, both interactive multimedia music education systems, and of Audacity, the audio editor used by millions. Dannenberg is also known for introducing functional programming concepts to describe real-time behavior, an approach that forms the foundation for Nyquist, a widely used sound synthesis language. As a composer, Dannenberg has had works performed by the Pittsburgh New Music Ensemble and the Pittsburgh Symphony, and at many international festivals. As a trumpet player, he has collaborated with musicians including Anthony Braxton, Eric Kloss, and Roger Humphries, and has performed in concert halls ranging from the historic Apollo Theater in Harlem to the Espace de Projection at IRCAM. Dannenberg is active in performing jazz, classical, and new works.

WORKSHOP: Meetings with Roger Dannenberg, creator of Audacity


In March: Tuesday the 8th from 17:00 to 19:00, Wednesday the 9th from 17:00 to 19:00, Thursday the 10th (attendance at the 19:00 concert), and Friday the 11th from 17:00 to 19:00, in the Image and Sound Laboratory of the ETSI de Telecomunicación (first floor of building 4P, UPV).


Greatly reduced price of €45 for: students enrolled in the MEVIC courses Sound Art and Electronic Music Production (Arte Sonoro y Producción de Música Electrónica) and Interaction Design 1, 2 and 3; students of the UPV Master's in Music; members and students of the collaborating institutions ETSIT and iTEAM; Berklee students; and UPV students and members. Everyone else: €90.

The workshop will cover Audacity, the audio processing software; Soundcool, the music education software for mobile phones, tablets, and Kinect; and algorithmic composition with Nyquist. All three are free software that can be downloaded from their respective websites. The workshop includes a concert by Dannenberg, whose pieces will be discussed as examples (detailed program below). The workshop will be taught in English.

PROGRAM

Day 1 – Audacity

The goals of this day of the workshop are to become familiar with the free, open-source editor Audacity, which was designed and created originally by Dominic Mazzoni and Roger Dannenberg at Carnegie Mellon University. We will quickly review the basic editing operations and then cover a number of useful features.


Audacity Basics – import, tracks, cut, copy, paste, delete, undo, envelopes, effects

Music Annotation – using label tracks to mark sound and export timing information

Advanced effects: Compression, Time Stretch, Noise removal, Ducking, Spectral Editing

Other features: Sync-lock, Spectral view, Multichannel Export

Day 2 – Algorithmic Composition (1)

The goals of this day are to learn about and explore techniques and models for algorithmic composition. We will focus on conceptual frameworks that can be used in many different programming languages and systems, or even with pencil and paper. Examples created with the Nyquist programming language will be used to demonstrate different models under real-time interactive control. I will also demonstrate Patterns, the live-coding system that will be used in the concert.

A historical perspective

Probability Distributions

Chaos

Fractals

Tendency Masks

Markov Models
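Nyquist is the language used for the workshop demonstrations, but the session stresses frameworks that carry over to other languages. As a small, language-neutral illustration of the last topic, the following Python sketch generates a melody from a first-order Markov model, in which each note is chosen with weights that depend only on the previous note. The transition table, the pitch names, and the function name `markov_melody` are made-up assumptions for illustration, not material from the workshop.

```python
import random

# Hypothetical first-order transition table (an assumption for this sketch):
# for each current pitch, the possible next pitches and their relative weights.
TRANSITIONS = {
    "C4": {"D4": 2, "E4": 3, "G4": 1},
    "D4": {"C4": 1, "E4": 2, "F4": 1},
    "E4": {"D4": 1, "F4": 1, "G4": 2},
    "F4": {"E4": 2, "G4": 1},
    "G4": {"C4": 2, "E4": 1, "F4": 1},
}


def markov_melody(start, length, rng=None):
    """Return `length` pitches, starting at `start` and following TRANSITIONS."""
    if length <= 0:
        return []
    rng = rng or random.Random()
    melody = [start]
    while len(melody) < length:
        options = TRANSITIONS[melody[-1]]
        # The next pitch depends only on the current one, with probability
        # proportional to its weight in the table.
        next_pitch = rng.choices(list(options), weights=list(options.values()), k=1)[0]
        melody.append(next_pitch)
    return melody


if __name__ == "__main__":
    # A fixed seed makes the output repeatable.
    print(markov_melody("C4", 12, random.Random(1)))
```

Changing the weights reshapes the melodic tendencies without touching the generating code; that separation of model and data is what lets the same idea move between Nyquist, other languages, or pencil and paper.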

Day 3 – Algorithmic Composition (2) and Interactive Music

The goals of this day are to discuss algorithmic composition, interaction, and music performance, including a reflection on some of the pieces presented in the concert as well as other works.

Forms of interaction

Examples from the concert

Examples from other work

Integrating algorithmic composition and live performance

We will wrap up by considering the combined possibilities of Nyquist, Audacity, and SoundCool for algorithmic and interactive performance.

Concert: Roger Dannenberg in concert

Thursday, March 10, at 19:00 in the Auditori Alfons Roig of the UPV Faculty of Fine Arts (Facultad de Bellas Artes). Free admission until capacity is reached; students of the Master's in Music and the MEVIC courses will have priority over other attendees.

PROGRAM:

Resound! Fanfares for Trumpet and Computer

Roger Dannenberg, Trumpet

I. Someone is Following You

II. Ripples in Time

III. The Bell Tower

IV. O For a Thousand Tongues to Sing

V. Hall of Mirrors

Separation Logic for Flute and Computer

Victor Maroto, Flute

Patterns

Roger Dannenberg, live coding

Improvisation for Trumpet and Computer

Roger Dannenberg, trumpet

Notes:

Resound! was composed for the Pittsburgh New Music Ensemble and premiered in the summer of 2002 at the Hazlett Theater in Pittsburgh, PA. Originally, the movements were performed individually, one at the beginning of each concert in the summer festival. Each fanfare expands the solo trumpet into an ensemble using a different technique. To some extent, this is like a canon, where part of the enjoyment is hearing music emerge from interlocking voices. In fact, the first fanfare is a strict canon created by one “live” trumpet and two delayed copies of the live trumpet sound. In “Ripples in Time,” descending arpeggios are created by delaying and pitch-shifting the trumpet tones. In “The Bell Tower,” the trumpet first records three pairs of alternating notes that the computer repeats throughout the piece. Three ascending phrases are played over the ringing, bell-like tones, which then fade out to a conclusion. “O For a Thousand Tongues to Sing” (whose title comes from a poem and hymn by Charles Wesley) uses a technique called granular synthesis. This technique chops sounds into thousands of very short segments, or grains, and plays them back with random amounts of delay and transposition. These parameters are varied throughout the piece to obtain different effects. In “Hall of Mirrors,” the trumpet’s quarter notes are delayed and transposed (as the trumpet plays) to fill in with 1, 2, 3, 4, and finally 5 intermediate notes, much like the reflections in a kaleidoscope.
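The granular synthesis technique described for “O For a Thousand Tongues to Sing” can be sketched in a few dozen lines. The following is a minimal offline illustration in plain Python, assuming a mono signal stored as a list of floats. It is not the software used in the piece, which processes the live trumpet in real time. The function names `granulate` and `resample`, the Hann window, and every parameter value are assumptions chosen for the example.

```python
import math
import random


def resample(grain, ratio):
    """Transpose a grain by reading it `ratio` times faster (2.0 = one octave up),
    using linear interpolation between neighbouring samples."""
    n_out = int(len(grain) / ratio)
    out = []
    for i in range(n_out):
        pos = i * ratio
        j = int(pos)
        frac = pos - j
        nxt = grain[j + 1] if j + 1 < len(grain) else 0.0
        out.append(grain[j] * (1.0 - frac) + nxt * frac)
    return out


def granulate(signal, grain_len=441, n_grains=200, max_delay=4410,
              max_semitones=3.0, seed=None):
    """Scatter windowed grains of `signal` with random delay and transposition.

    All lengths and delays are in samples; the defaults (10 ms grains and
    up to 100 ms of delay at 44.1 kHz) are arbitrary choices for this sketch.
    """
    if grain_len < 2 or len(signal) < grain_len:
        raise ValueError("signal must be at least grain_len (>= 2) samples long")
    rng = random.Random(seed)
    # Hann window: tapers each grain to zero at both ends to avoid clicks.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * k / (grain_len - 1))
              for k in range(grain_len)]
    out = [0.0] * len(signal)
    for _ in range(n_grains):
        # 1. Cut a short grain from a random position and window it.
        start = rng.randrange(0, len(signal) - grain_len + 1)
        grain = [s * w for s, w in zip(signal[start:start + grain_len], window)]
        # 2. Transpose it by a random amount of up to +/- max_semitones.
        ratio = 2.0 ** (rng.uniform(-max_semitones, max_semitones) / 12.0)
        grain = resample(grain, ratio)
        # 3. Delay it by a random amount, then overlap-add it into the output.
        offset = start + rng.randrange(0, max_delay + 1)
        end = offset + len(grain)
        if end > len(out):
            out.extend([0.0] * (end - len(out)))
        for k, sample in enumerate(grain):
            out[offset + k] += sample
    return out


if __name__ == "__main__":
    sr = 44100
    # One second of a 440 Hz sine stands in for the live trumpet.
    tone = [math.sin(2 * math.pi * 440 * t / sr) for t in range(sr)]
    cloud = granulate(tone, seed=42)
    print(len(cloud), max(abs(x) for x in cloud))
```

Here `max_delay` and `max_semitones` are fixed for the whole call; varying them over time would correspond to the parameter changes the notes describe.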

In spite of all the technology, I believe the real challenge is always to write and perform music that captures the imagination and carries the listener to a new and wonderful place. I hope these little pieces will expand what we think of as “trumpet music” and perhaps open some new pathways for composers, performers, and listeners alike. The complete set of seven fanfares was recorded by Neal Berntsen for his “Trumpet Voices” CD (Four Winds).

Separation Logic is a contemporary work that consists of a written part for flute and software that activates real-time digital signal processing to “accompany” the solo part. The electronic part consists of delays, pitch shifting, granulation, spectral effects, and some algorithmic generation of the parameters of these effects. The piece resulted from a collaboration with flutist Lindsey Goodman and is dedicated to the memory of John Reynolds, a computer scientist and good friend of the composer. Prof. Reynolds played a key role in the development of separation logic, a formal mathematical approach to proving properties of concurrent programs.

Patterns is a live-coding performance piece using an experimental visual language. The key idea is that objects generate streams of data and notes according to parameters that can be adjusted on the fly. Many objects take other objects, or even lists of objects, as inputs, allowing complex patterns to be composed from simpler ones. The interconnections of objects are indicated graphically by nested circles. The composition/improvisation is created by manipulating graphical structures in real time to create a program that in turn generates the music. The audience sees the program while listening to the music it generates. The Patterns language used in the performance (or demo) and its implementation were created by the composer.
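Patterns itself is a visual language created by the composer, and its internals are not described here. As a loose, text-based analogy for its key idea (objects that produce streams of values from adjustable parameters and that accept other objects as inputs), the following Python sketch nests small pattern objects. The class names `Cycle`, `Choose`, and `Transpose` are hypothetical and do not come from Patterns.

```python
import random


class Pattern:
    """A pattern object yields one value each time next() is called."""

    def next(self):
        raise NotImplementedError


def value_of(item):
    """Items may be plain values or nested patterns; a nested pattern is asked for its next value."""
    return item.next() if isinstance(item, Pattern) else item


class Cycle(Pattern):
    """Steps through its items in order, wrapping around at the end."""

    def __init__(self, items):
        self.items = list(items)
        self.index = 0

    def next(self):
        item = self.items[self.index % len(self.items)]
        self.index += 1
        return value_of(item)


class Choose(Pattern):
    """Returns a randomly chosen item each time."""

    def __init__(self, items, seed=None):
        self.items = list(items)
        self.rng = random.Random(seed)

    def next(self):
        return value_of(self.rng.choice(self.items))


class Transpose(Pattern):
    """Adds an offset, adjustable at any time, to another pattern's values."""

    def __init__(self, source, offset=0):
        self.source = source
        self.offset = offset

    def next(self):
        return value_of(self.source) + self.offset


if __name__ == "__main__":
    # Nesting: a cycle containing a random choice, wrapped in a transposer.
    melody = Transpose(Cycle([60, Choose([62, 64, 65], seed=3), 67]))
    print([melody.next() for _ in range(6)])
    melody.offset = 12  # change a parameter "on the fly"
    print([melody.next() for _ in range(6)])
```

Nesting objects in code plays roughly the role that nested circles play in the visual language, and reassigning `melody.offset` while values are being drawn stands in for adjusting a parameter during a performance.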

Improvisation for Trumpet and Computer is technically simple: The live trumpet sound is delayed and transposed in a fixed way throughout the piece. The performer improvises, responding to his own phrases as they reappear after delays.
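Because the processing is fixed, the idea can be shown symbolically. The Python sketch below works on note events rather than audio. This is an assumption made for readability, since the piece itself delays and transposes the live trumpet sound. The delay times and transpositions in `ECHOES` and the function name `with_echoes` are hypothetical, not the values used in the piece.

```python
from typing import List, Sequence, Tuple

Note = Tuple[float, int]  # (onset time in seconds, MIDI pitch number)

# Hypothetical fixed settings: (delay in seconds, transposition in semitones).
ECHOES: List[Tuple[float, int]] = [(2.0, 0), (4.0, -5)]


def with_echoes(live: Sequence[Note],
                echoes: Sequence[Tuple[float, int]] = ECHOES) -> List[Note]:
    """Return the live notes plus one delayed, transposed copy per echo, in time order."""
    out = list(live)
    for delay, semitones in echoes:
        out.extend((t + delay, p + semitones) for t, p in live)
    return sorted(out)


if __name__ == "__main__":
    phrase = [(0.0, 60), (0.5, 62), (1.0, 64)]
    for onset, pitch in with_echoes(phrase):
        print(f"{onset:4.1f}s  pitch {pitch}")
```

The mapping never changes; all the variety comes from how the performer answers the returning copies.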

And after the concert, at 20:00: an audiovisual invasion by the Master's in Visual Arts and Multimedia (AVM) on the façade of the UPV Faculty of Fine Arts (BBAA). We look forward to seeing you! MUESTRA VIDEOMAPPING 2016 AVM (AVM 2016 Videomapping Showcase), created by the students of Module 1 and coordinated by Emilio Martínez through the course Activism and New Media (Activismo y nuevos medios).

Meetings with Dannenberg

Organized by:

Soundcool, Electronic Music and Video Creation Courses (MEVIC, Cursos de Música Electrónica y Vídeo Creación), Master's in Music (Máster en Música)

In collaboration with:

Generalitat Valenciana, CulturArts, UPV Cultural Activities Area (Área de Actividades Culturales UPV), Ensemble Col legno, Instituto de Telecomunicaciones y Aplicaciones Multimedia (iTEAM), and ETSI de Telecomunicación.
